The Dark Side Of Social Media

Chad M, Co-Founder (IPA)

20 November 2025

I provide a lot of training to law enforcement agencies around the world. The bulk of that training is related to investigating incidents of online child exploitation. Working those types of cases was something I did for many years prior to retiring from law enforcement.

In my current role as a co-founder of International Protection Alliance, I train and support law enforcement investigators all over the world as they navigate these complex and technical cases. Because of that, I have a lot of undercover accounts that I maintain and use primarily for training and for supporting active criminal investigations.

These accounts are used to search for online predators and to gather intelligence on accounts, groups, and pages created by and/or used to exploit children. These things are not difficult for a trained investigator to find; they hide in plain sight on some of the most popular social media platforms, used by over 2 billion users daily.

I don’t co-mingle undercover accounts with personal social media accounts. That means I don’t use my personal social media to search for online predators or exploitative behaviors. Vice versa, I don’t use my work-related accounts to post or communicate personally. It’s a basic rule, and it’s an important one.

Because of this, my social media newsfeeds look quite different. My personal social media is littered with things that interest me personally, like food. And beer. Generally, food and beer and occasionally dogs. Because of that, I get notifications about new restaurants and pubs near me. I get notifications if a new dog park opens up near me. I see video after video of people eating street food or discovering dive bars or a new speakeasy. I see these videos because these are things that interest me.

Social media understands that these are things which will keep me engaged on their platform so they fill my newsfeed with this type of content. These are not necessarily from accounts or pages that I follow. Many of these are suggested accounts, suggested to me by whatever platform or application I’m using at the time. It’s helpful. It has directed me to many burgers and beers at places that I would not have found otherwise.

My undercover accounts also suggest content, which plays over and over in my newsfeed. That content reflects the behaviors of the many accounts I follow and communicate with, which are accounts created by and/or used to exploit children.

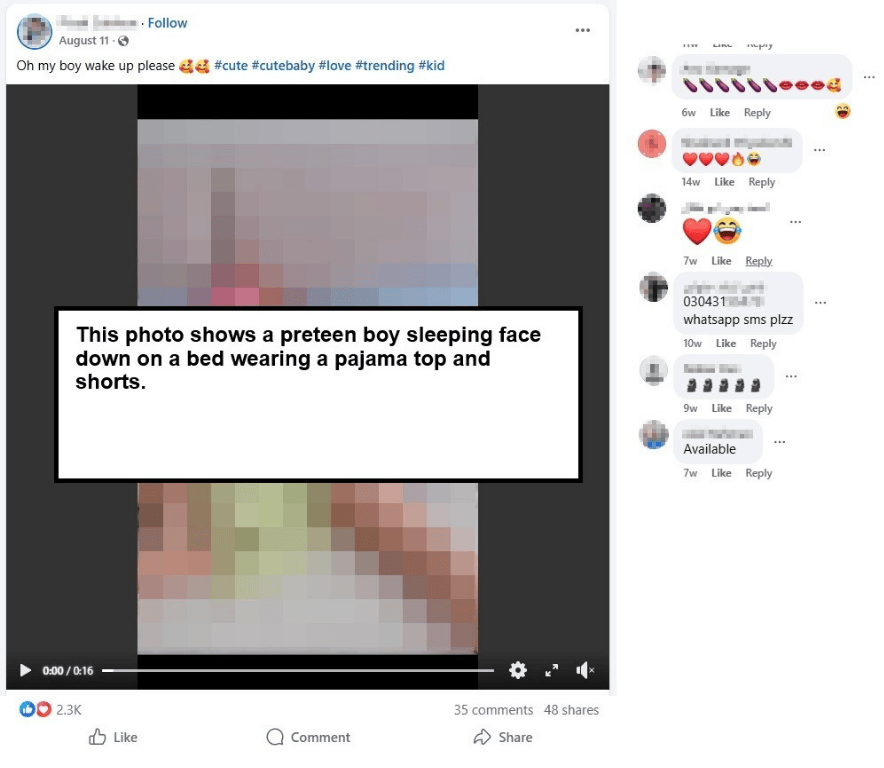

Many of these groups and pages are made up of thousands of users, many of them pedophiles. For example, a page may appear to be promoting child modeling or some other kid-friendly activity, full of posts and photos of young children from all over the world. The comments, however, tell a different story. (NOTE: THE ORIGINAL IMAGE POSTED CONTAINED NO NUDITY).

Many of these comments are sexually charged and full of inappropriate emojis. Others contain the personal phone numbers of potential online predators looking for victims. It is very common for users to post links to private Telegram or WhatsApp group chats where illegal images are shared and sold in encrypted chats.

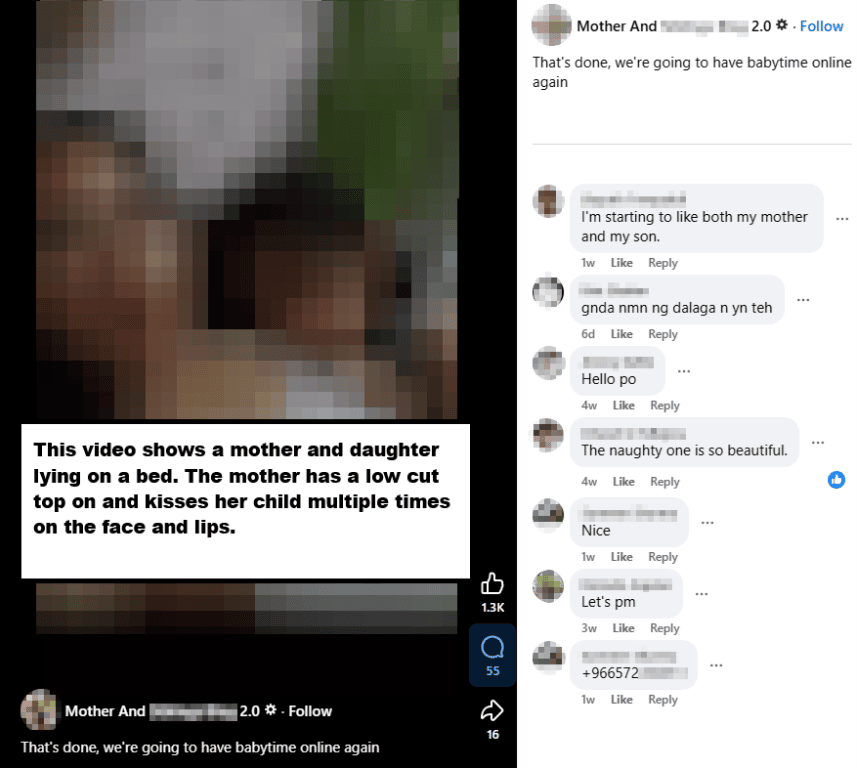

My undercover newsfeeds don’t contain tips and directions to pubs and restaurants; instead, they contain live-streaming videos of mothers breastfeeding toddlers to hundreds or even thousands of viewers.

They contain video after video of underage, partially dressed children dancing provocatively at home, of parents bathing children, or of adult men and women kissing children. None of it is openly illegal, but all of it is sexually charged. All of it is suggested and displayed in an attempt to keep me engaged and to connect me to other like-minded account holders.

The algorithms built to keep people using these applications do not care whether the behaviors they are monetizing are legal or illegal. The algorithms do not care whether the behaviors are moral or immoral. The algorithms only care about keeping users active and engaged.

These platforms are no longer just providing a service that allows users to post content. These platforms are suggesting and directing content to users, and in some cases, it’s content like the video below. (NOTE: THE ORIGINAL VIDEO POSTED CONTAINED NO NUDITY)

This is the dark side of social media. This isn’t the dark web. These are public groups that exist to promote exploitative behavior. The children in these videos are being exploited by their parents for popularity and for “clicks” and “likes”.

The child in the above video appears to be approximately 6-8 years old. These sites are the breeding grounds for Child Sexual Abuse Material (CSAM) distribution groups hosted on more secure and end-to-end encrypted applications like Telegram and WhatsApp.

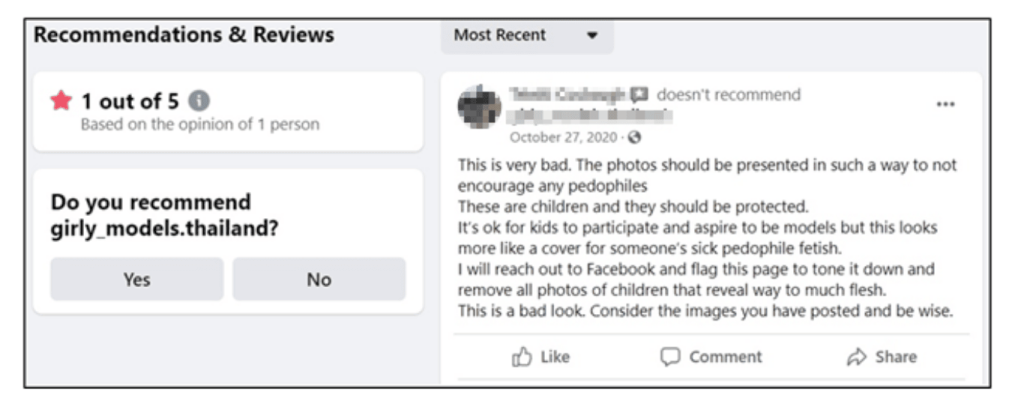

Most social media platforms will tell you that these accounts do not violate the rules or meet the minimum criteria for suspension or deactivation. Even after being reported, many of these accounts thrive.

The report shown above is likely similar to many of the reviews and complaints that social media providers receive every day with over 2.2 billion daily users. The issue isn’t finding the content and reporting it. The issue is that nothing is being done about it. Instead, the providers hide behind Section 230, which provides them immunity for content posted by their users. But Section 230 doesn’t provide them immunity for suggesting the content to other users or for designing algorithms to distribute the content to other “like-minded” users.

The comment above was left on a page in 2020. That page was created and moderated by the father of two young girls in Southeast Asia. That father, currently incarcerated, was eventually charged with the online exploitation and sexual abuse of those children, who were ages 7 and 10 at the time of his arrest. Every single time a child accesses the internet, they are at risk.

There are no safe havens on the World Wide Web, and that includes any and all social media sites and applications. Don’t fall into a false sense of security just because you’ve been using social media for years and all you see in your newsfeed is American football and travel content. Social media may very well be responsible for you discovering your family’s next international vacation destination, but it’s just as likely to direct a pedophile to his next cheerleading competition. Be careful what you post, because you are not in full control of who sees it.

When it comes to protecting your children, you cannot rely on online service providers to police their own networks and shield kids from inappropriate content. Instead, that content is promoted and suggested, with full immunity from prosecution, and it will be until we stand up as a society and start holding providers accountable or stop using their applications.

#internationalprotectionalliance #www.protectall.org #protectall #IPA